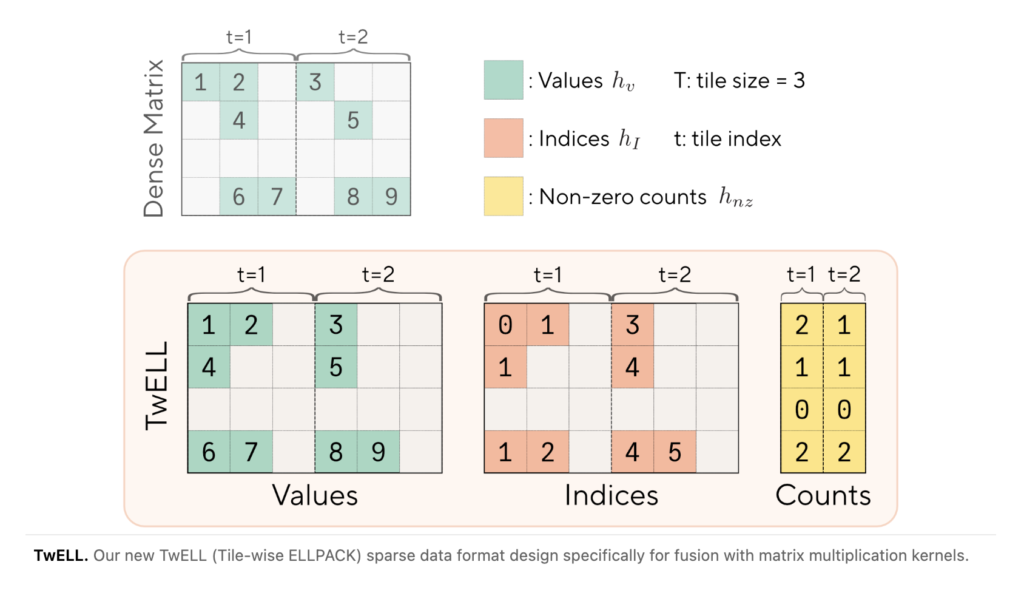

Sakana AI and NVIDIA Introduce TwELL with CUDA Kernels for 20.5% Inference and 21.9% Training Speedup in LLMs

Scaling large language models (LLMs) is expensive. Every token processed during inference and every gradient computed during training flows through feedforward layers, which account for over two-thirds of model parameters and more than 80% of total FLOPs in larger models. A team of researchers from Sakana AI and NVIDIA has worked on new research that […]