Liquid AI Releases LFM2.5: A Compact AI Model Family for Real On-Device Agents

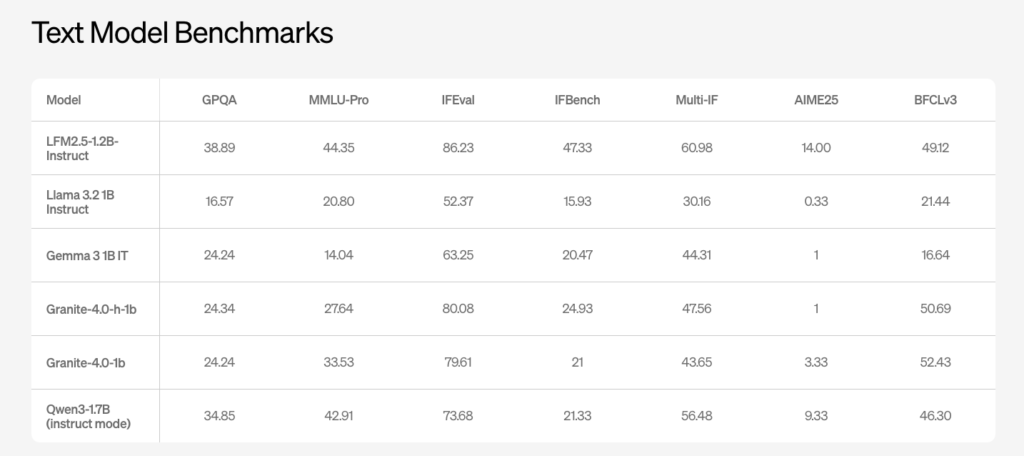

Liquid AI has introduced LFM2.5, a new generation of small foundation models built on the LFM2 architecture and focused on on-device and edge deployments. The model family includes LFM2.5-1.2B-Base and LFM2.5-1.2B-Instruct, and extends to Japanese, vision-language, and audio-language variants. It is released as open weights on Hugging Face and exposed through the […]
